AN ENSEMBLE EXPLAINABILITY FRAMEWORK FOR MULTIMODAL CHEST X-RAY DISEASE

DOI:

https://doi.org/10.33003/fjs-2026-1003-4826

Keywords:

Explainable AI, Chest X-ray, SHAP, Grad-CAM, LIME, Multimodal Learning, Medical Imaging, PRISMA

Abstract

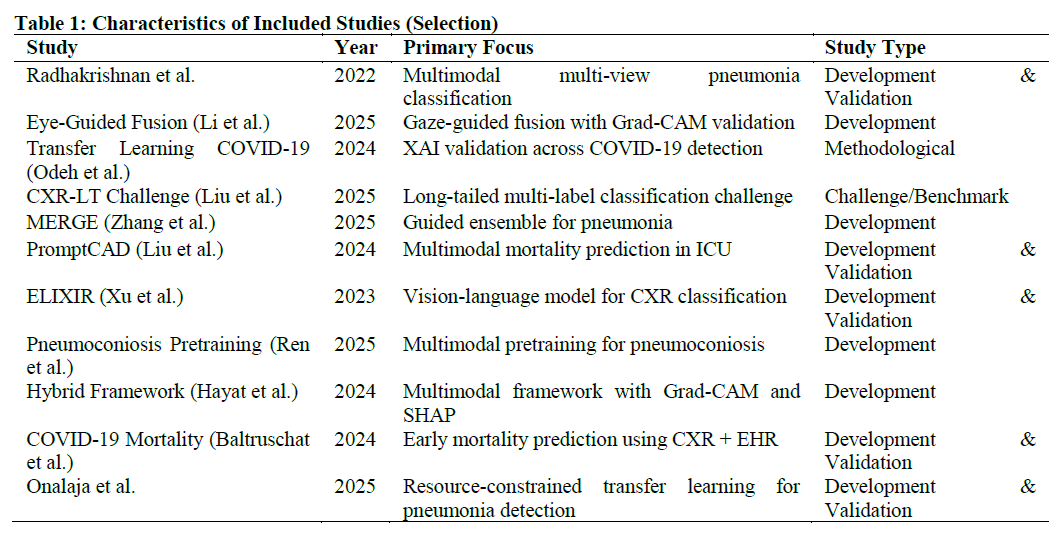

This systematic literature review examines ensemble explainability frameworks for multimodal chest X-ray (CXR) classification using SHAP, Grad-CAM, and LIME. Following the PRISMA 2020 guidelines, we searched Scopus, Google Scholar, PubMed, and arXiv for work published between 2016 and 2025, identified 945 records, and included 30 high-quality papers after rigorous screening. The findings reveal a clear trend toward multimodal architectures that combine imaging with clinical parameters, electronic health records, and expert annotations. Grad-CAM dominates as a visualization tool for localizing pathological features (97% of studies), while SHAP and LIME are increasingly used for model-agnostic feature attribution. However, true ensemble frameworks that integrate all three methods remain rare (13% of studies). High-performing multimodal systems achieved AUROCs of 0.82–0.99 for mortality prediction and 0.85–0.96 for disease classification. Critical gaps include: (1) a lack of standardized XAI validation protocols; (2) inconsistent reporting of metrics and datasets; (3) limited external validation; and (4) insufficient comparative analysis of XAI methods. This review synthesizes current methodologies and proposes future directions for developing interpretable AI systems for chest radiograph analysis.

License

Copyright (c) 2026 Dahiru Usman Haruna, Abubakar Sadiq Hassan, Idi Mohammed

This work is licensed under a Creative Commons Attribution 4.0 International License.